Empire AI is being built as a phased AI supercomputing environment centered on accelerated training, inference, and data-intensive research.Documentation Index

Fetch the complete documentation index at: https://docs.nyc-ai.app/llms.txt

Use this file to discover all available pages before exploring further.

Alpha system

Alpha is the first production-facing phase of the platform. Public Empire AI materials describe the October 2024 launch as a first-stage system powered by 96 advanced processors. CCR’s user documentation describes Alpha as the current onboarding and early-use environment while Beta procedures continue to mature. In practice, Alpha is where researchers validate software stacks, data paths, and job patterns before moving to larger allocations.Beta system

Beta is the major architecture jump. Empire AI announced Beta in June 2025 with a $40 million investment and described it as roughly 11 times stronger for AI training than Alpha, with about 40 times the inference throughput and 8 times the storage footprint.Beta architecture details

| Component | Specification |

|---|---|

| GPU architecture | 288 NVIDIA Blackwell GPUs |

| CPU nodes | 60 nodes with NVIDIA Grace CPU Superchips |

| Blackwell transistor count | 208 billion transistors per GPU |

| Process technology | TSMC 4NP |

| Superchip design | DGX GB200 systems built around Grace Blackwell Superchips |

| Interconnect | NVLink unified fabric |

| Rack-scale bandwidth | Up to 130 TB/s across a single NVL72 rack |

| Rack-scale AI performance | Up to 1.44 exaFLOPS at FP4 precision |

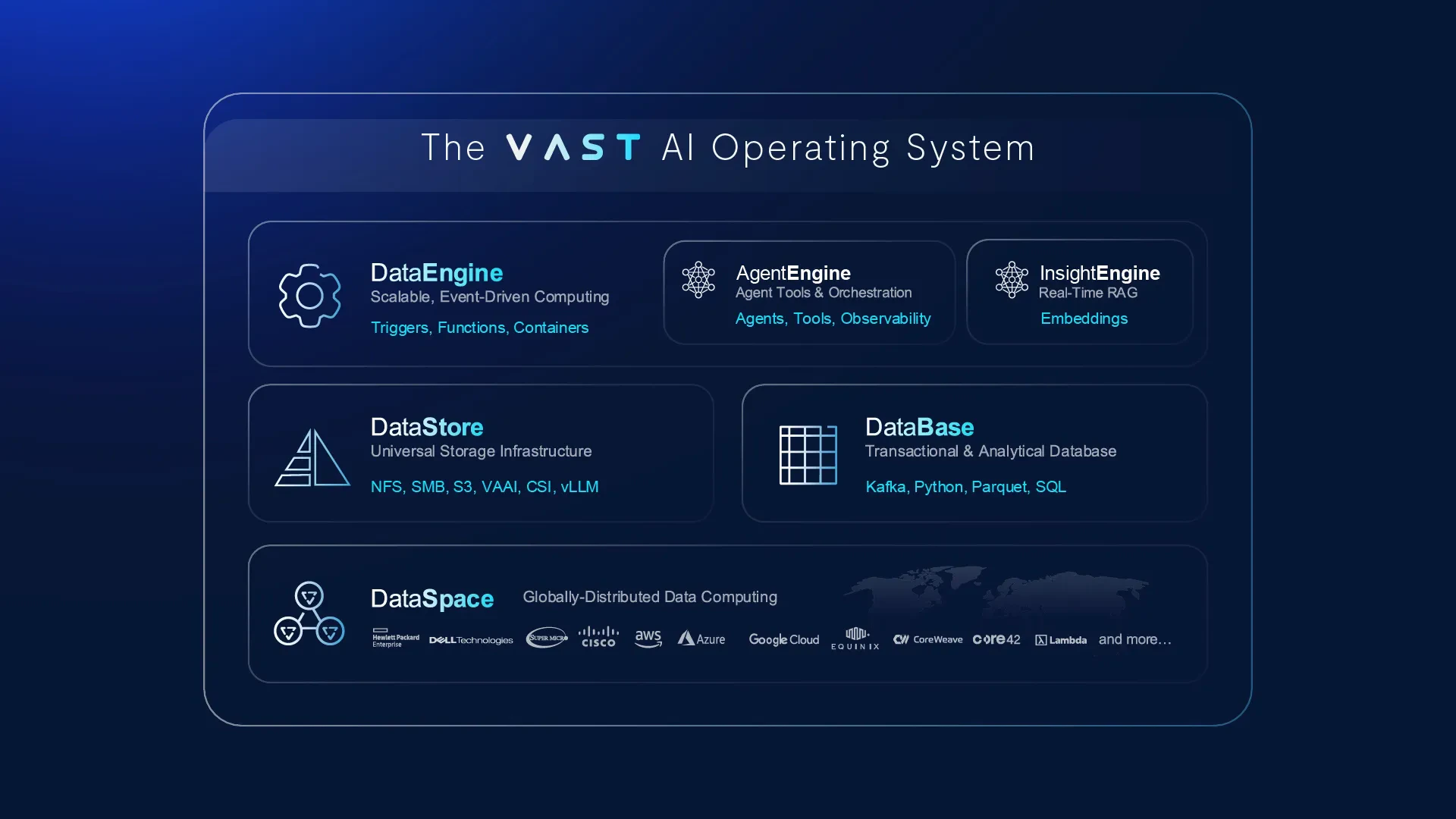

| Storage tier 1 | 20+ PB on the VAST Data AI Operating System |

| Storage tier 2 | 10+ PB on DDN’s data intelligence platform |

| Operations software | NVIDIA AI Enterprise and NVIDIA Mission Control |

Beta hardware

NVIDIA DGX SuperPOD (DGX GB200)

NVIDIA Grace CPU Superchip

VAST Data AI Operating System

DDN data intelligence platform

Why the Blackwell stack matters

The key design choice is not just “more GPUs.” Beta is tuned for modern large-model workflows:- FP4 support and the Transformer Engine make it easier to run very large model training and inference efficiently.

- High-bandwidth NVLink reduces the communication penalty that often slows distributed GPU jobs.

- Grace CPUs and large storage tiers support the data movement and preprocessing that AI pipelines depend on.